Update: I fixed it by adding the following after the home plate renderer renders its first frame:

    renderer.compile( scene, camera );

I can’t believe I fixed it myself, because I’ve been trying off and on for two weeks. I’ve read the WebGLRenderer documentation before, and I thought I had already tried that. I guess not.
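
For anyone landing here with the same problem, this is roughly how the fix could be wired up. It is only a sketch: the helper name and all parameter names are made up for illustration, and it assumes `renderer` in the line above is the main renderer and that compiling once, right after the overhead renderer's first frame, is enough.

```javascript
// Hypothetical helper, not the game's actual code.
// Returns a function that renders the overhead (home plate) view and,
// the first time it runs, calls compile() on the main renderer afterwards.
function createOverheadRenderStep( overheadRenderer, overheadCamera, mainRenderer, mainCamera, scene ) {

	let compiledOnce = false;

	return function renderOverhead() {

		// Render the top-down view of home plate.
		overheadRenderer.render( scene, overheadCamera );

		// Only after the first overhead frame: ask the main renderer
		// to compile the scene's materials again (assumption: once is enough).
		if ( ! compiledOnce ) {

			mainRenderer.compile( scene, mainCamera );
			compiledOnce = true;

		}

	};

}
```

The returned function would be called once per swing, in place of a direct call to the overhead renderer's `render()`.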

I’ll do my best to explain the problem I’m having. I create a ShaderMaterial with the following code:

    let textures = [];

    if ( shader == "dirt" ) {

        textures = [
            { "file": "dirt.jpg", "t": null },
            { "file": "dirtDark.jpg", "t": null },
            { "file": "dirtN.jpg", "t": null }
        ];

        for ( let l = 0; l < textures.length; l++ ) {

            textures[l].t = new THREE.TextureLoader().load( "textures/" + textures[l].file );
            textures[l].t.anisotropy = aF;
            textures[l].t.wrapS = THREE.RepeatWrapping;
            textures[l].t.wrapT = THREE.RepeatWrapping;

        }

        let standard = THREE.ShaderLib['physical'];

        let theUniforms = THREE.UniformsUtils.merge( [
            standard.uniforms,
            {
                "randomSeed": { value: Math.random() + Math.random() * Math.random() },
                "map2" : { value: textures[1].t },
            }
        ] );

        let theMaterial = new THREE.ShaderMaterial({
            lights: true,
            uniforms: theUniforms,
            defines: {
                PHYSICAL: true,
                USE_NORMALMAP_TANGENTSPACE: true,
                USE_NORMALMAP_OBJECTSPACE: false,
                USE_ANISOTROPY: true,
                USE_CLEARCOAT: false,
                USE_IRIDESCENCE: true,
                USE_SHEEN: false,
                USE_ENVMAP: false,
                USE_SPECULAR: false
            },
            vertexShader: document.getElementById( 'dirtVS' ).textContent,
            fragmentShader: document.getElementById( 'dirtFS' ).textContent
        });

        theMaterial.uniforms.map.value = textures[0].t;
        theMaterial.map = textures[0].t;
        theMaterial.map2 = textures[1].t;

        theMaterial.uniforms.normalMap.value = textures[2].t;
        theMaterial.normalMap = textures[2].t;

        theMaterial.uniforms.normalScale.value = new THREE.Vector2( 1.4, 1.4 );
        theMaterial.normalScale = new THREE.Vector2( 2.0, 2.0 );

        // theMaterial.uniforms.diffuse.value = new THREE.Color( 0xBBBBBB );
        theMaterial.uniforms.roughness.value = 1.0;
        theMaterial.uniforms.metalness.value = 0.0;
        theMaterial.uniforms.ior.value = 1.0;
        theMaterial.uniforms.reflectivity.value = 0.0;
        theMaterial.uniforms.iridescence.value = 0.0;
        theMaterial.uniforms.iridescenceIOR.value = 1.0;
        // theMaterial.uniforms.specularIntensity.value = 0.0;
        // theMaterial.uniforms.specularColor.value = new THREE.Color( 0x000000 );

        return theMaterial;

    }
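
The setup above assigns several values twice, once to the uniform and once to a same-named property on the material (and `normalScale` ends up with two different values, 1.4 and 2.0). In case it helps, here is a small sketch of a helper that writes both from a single value so the pair can't drift apart. The function name is hypothetical and it is not part of three.js.

```javascript
// Hypothetical helper, not a three.js API.
// Writes one value to both material.uniforms[name].value and material[name].
function setUniformAndProperty( material, name, value ) {

	// Fail loudly if the uniform does not exist, e.g. because of a typo.
	if ( ! ( name in material.uniforms ) ) {

		throw new Error( "Unknown uniform: " + name );

	}

	material.uniforms[ name ].value = value;
	material[ name ] = value;

}

// Example use with the material from the post (assumed names):
// setUniformAndProperty( theMaterial, "map", textures[0].t );
// setUniformAndProperty( theMaterial, "normalMap", textures[2].t );
```

Whether the material property or the uniform is the one the shader actually relies on depends on the three.js version and the shader, so this only keeps the two consistent; it doesn't decide which one matters.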

That material is applied to a mesh on an imported glTF model. It works, BUT I have a second renderer beside the main one, which points straight down at home plate from above. That renderer is only activated once per swing, but as soon as it renders one frame, my ShaderMaterial does this:

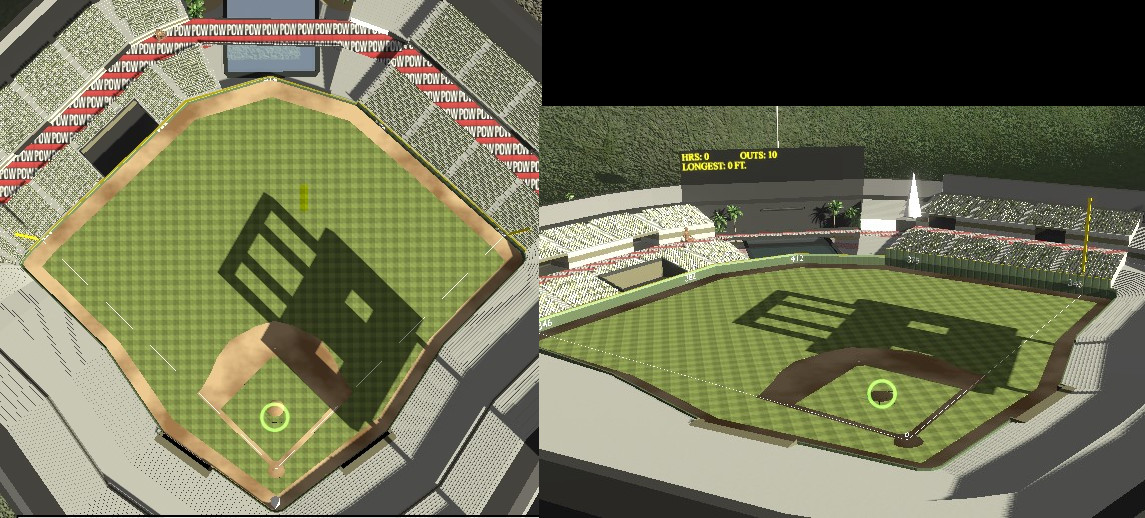

What I’m conveying with that image is that the ShaderMaterial, which is the dirt, looks as intended when the camera is pointing straight down at it, but at any other angle it’s dark, like you see on the right. I don’t understand why that other renderer is causing the main renderer to do this.

I’m hoping someone has some input on this. I’ve given it a lot of thought before posting here.

Here’s another oddity about it all. I have a couple of cube cameras and cube render targets for reflective materials. There’s a statue and a pool in CF that use envmaps. When I update those, it corrects the ShaderMaterial issue completely, but I added a bloom pass to the game today, and now even when I update the reflections, it doesn’t correct the ShaderMaterial problem.

If you wanna see it in action, the link is Home Run Derby.

Update: I removed all the reflections (cube cameras and cube render targets) and it didn’t change anything.

Update: It definitely has something to do with the separate renderer looking down at home plate, but I don’t understand why.